mHC: Manifold-Constrained Hyper-Connections

Source: “mHC: Manifold-Constrained Hyper-Connections,” arXiv:2512.24880.

Introduction

Background

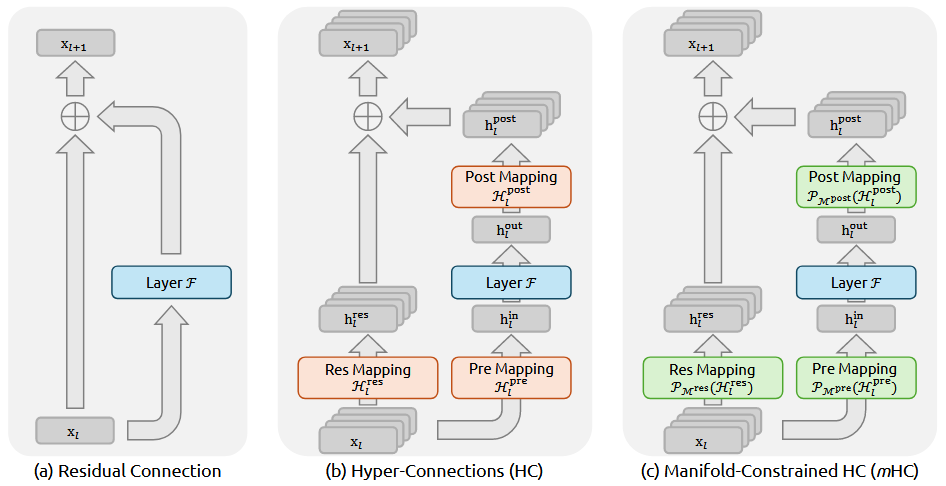

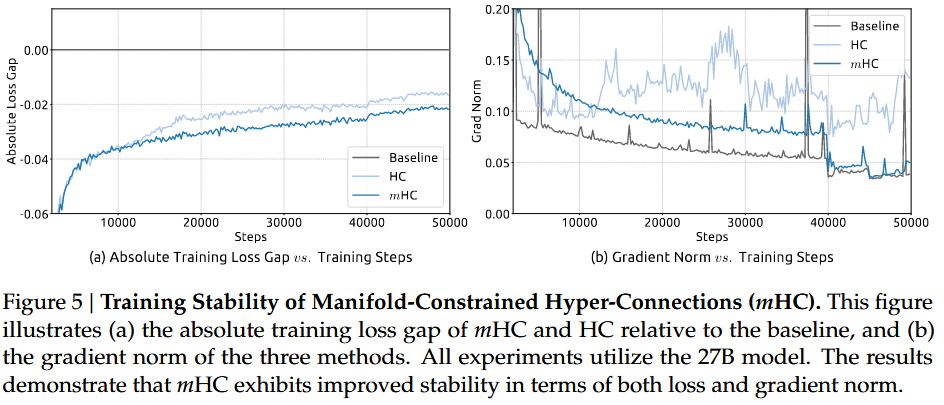

Deep neural network architectures have evolved significantly since the introduction of ResNets in 2016, and residual connections have become a cornerstone of modern models such as Transformers and large language models (LLMs). Hyper-Connections (HC) extended this paradigm by widening the residual stream and diversifying its connectivity patterns. This yields performance gains, but it also breaks the identity-mapping property of the residual connection, which leads to training instability and limits scalability. The paper addresses the need for stable, efficient macro-architectures that support large-scale LLM training without excessive memory overhead.

Objective

The primary goal is to develop Manifold-Constrained Hyper-Connections (mHC), a framework that constrains HC’s residual mappings to the manifold of doubly stochastic matrices using the Sinkhorn-Knopp algorithm, restoring the identity-mapping property needed for stable signal propagation. mHC also incorporates infrastructure optimizations (kernel fusion, recomputation, and communication overlapping within the DualPipe schedule) so that LLMs can be trained at scale with minimal overhead.
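As a reading aid, the constraint can be written out explicitly. The block below is a standard definition of a doubly stochastic matrix plus two elementary consequences. The symbols $\mathcal{B}_n$, $\mathbf{1}$ (the all-ones vector), and $x$ are notation assumed here for illustration and may differ from the paper's.

$$
\mathcal{B}_n = \left\{ H \in \mathbb{R}^{n \times n} \;:\; H_{ij} \ge 0,\quad H\mathbf{1} = \mathbf{1},\quad \mathbf{1}^\top H = \mathbf{1}^\top \right\}
$$

$$
A, B \in \mathcal{B}_n \;\Longrightarrow\; (AB)_{ij} \ge 0,\quad (AB)\mathbf{1} = A(B\mathbf{1}) = \mathbf{1},\quad \mathbf{1}^\top (AB) = (\mathbf{1}^\top A)B = \mathbf{1}^\top
$$

$$
H \in \mathcal{B}_n \;\Longrightarrow\; \mathbf{1}^\top (Hx) = \mathbf{1}^\top x
$$

The second line shows that a product of such matrices stays in the same set, so stacking many layers cannot amplify the row or column sums. The third line shows that the sum of the signal across the $n$ residual streams is unchanged by the mapping.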

Conclusion

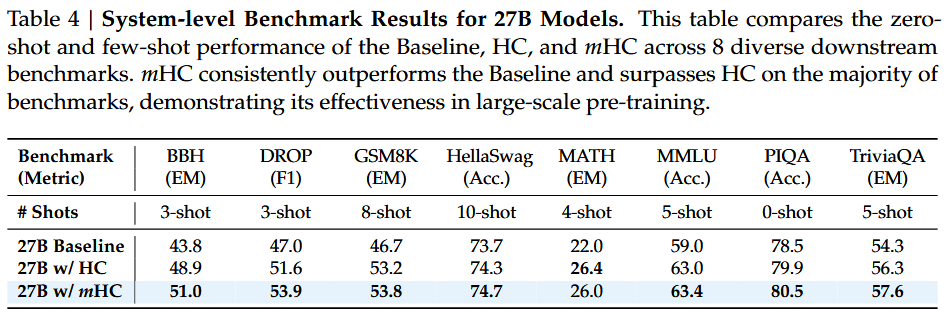

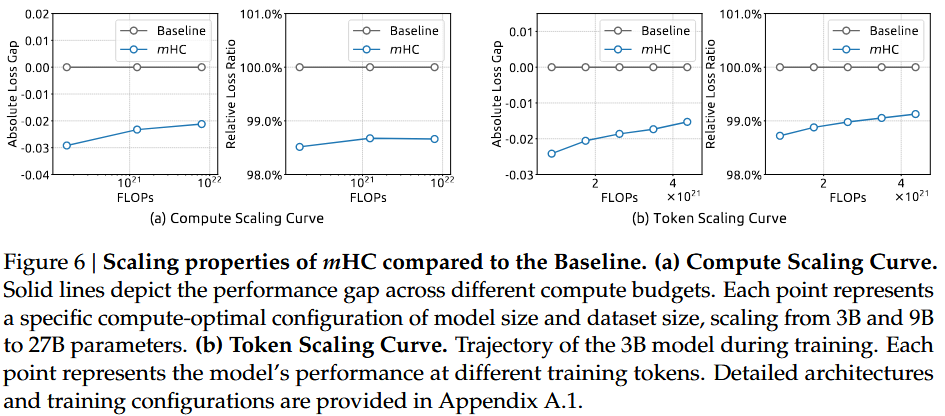

mHC stabilizes HC by constraining its residual mappings to the doubly stochastic manifold, achieving stable training with only 6.7% training-time overhead. It outperforms HC on multiple benchmarks, scales better, and contributes to a deeper understanding of topological architecture design. The framework also opens avenues for exploring other manifold constraints in future LLM architectures.

Literature Review

Macro-design in deep learning concerns the topological structure between blocks, and ResNet established the residual connection as its fundamental element. Extensions such as DenseNet, FractalNet, and DLA increased connectivity complexity. Recent works like HC, RMT, MUDDFormer, and Residual expand residual streams but often compromise stability. mHC builds on HC by constraining its mappings to a manifold, which addresses the instability while maintaining expressivity. It also differs from prior work in its rigorous infrastructure optimizations for efficiency.

Methodology

mHC constrains HC’s residual mapping H_res to the Birkhoff polytope (the set of doubly stochastic matrices) using the Sinkhorn-Knopp algorithm, which ensures norm preservation and closure under composition, and therefore stable propagation across layers. The input and output mappings H_pre and H_post are constrained to be non-negative. For efficiency, kernel fusion combines operations into fewer kernels, recomputation reduces the memory footprint by discarding intermediate activations and recomputing them during the backward pass, and overlapping within the DualPipe schedule hides communication cost in pipeline parallelism. Experiments use MoE-based LLMs with expansion rate n=4.
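The Sinkhorn-Knopp step can be illustrated with a minimal pure-Python sketch. It is not the paper's fused kernel. The function names `sinkhorn_knopp` and `max_deviation`, the use of `exp` to make the entries positive, and the iteration counts are all assumptions made for this illustration.

```python
import math


def sinkhorn_knopp(scores, num_iters=20):
    """Approximately map a square matrix of real scores to a doubly
    stochastic matrix by alternating row and column normalization."""
    n = len(scores)
    # Assumption of this sketch: exponentiate so every entry is strictly positive.
    m = [[math.exp(v) for v in row] for row in scores]
    for _ in range(num_iters):
        # Row step: scale each row so it sums to 1.
        m = [[v / sum(row) for v in row] for row in m]
        # Column step: scale each column so it sums to 1.
        col_sums = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col_sums[j] for j in range(n)] for i in range(n)]
    return m


def max_deviation(m):
    """Largest absolute gap between any row or column sum and 1."""
    n = len(m)
    row_err = max(abs(sum(row) - 1.0) for row in m)
    col_err = max(abs(sum(m[i][j] for i in range(n)) - 1.0) for j in range(n))
    return max(row_err, col_err)


# Hypothetical 4x4 score matrix, matching the expansion rate n=4 used in the experiments.
EXAMPLE_SCORES = [
    [0.3, -0.8, 0.1, 0.5],
    [-0.2, 0.9, -0.4, 0.0],
    [0.7, 0.2, -1.0, 0.4],
    [-0.6, 0.1, 0.8, -0.3],
]
```

Calling `max_deviation(sinkhorn_knopp(EXAMPLE_SCORES, num_iters=k))` for a few values of `k` shows how closely a finite number of iterations approaches exact double stochasticity. The final column step makes the column sums exact up to rounding, so any remaining gap sits in the row sums.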

Experiment

Experiments on 3B, 9B, and 27B MoE models show mHC achieves stable training, mitigating HC’s loss surges and gradient explosions. mHC outperforms HC on downstream benchmarks, with gains on BBH and DROP, and maintains performance advantages across scales. Stability analysis reveals mHC’s gain magnitudes are bounded (max ~1.6) versus HC’s extreme values (up to 3000). Scalability curves indicate robust improvements with compute, and token scaling shows sustained gains. Limitations include slight deviations from perfect doubly stochasticity due to finite Sinkhorn iterations.
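The contrast between bounded and exploding gains can be illustrated with a toy calculation. It does not reproduce the paper's measurement. The two 3x3 matrices, the depth of 30, and the use of the maximum absolute row sum as the "gain" are all assumptions chosen for this sketch.

```python
def matmul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def max_abs_row_sum(m):
    """Toy gain measure (an assumption here): the largest absolute row sum."""
    return max(sum(abs(v) for v in row) for row in m)


def composite_gain(layer, depth):
    """Gain of the same mapping applied `depth` times in sequence."""
    prod = layer
    for _ in range(depth - 1):
        prod = matmul(layer, prod)
    return max_abs_row_sum(prod)


# Every row and every column sums to 1, so this matrix is doubly stochastic.
DOUBLY_STOCHASTIC = [
    [0.5, 0.5, 0.0],
    [0.25, 0.25, 0.5],
    [0.25, 0.25, 0.5],
]

# Hypothetical unconstrained mapping whose rows each sum to 1.2.
UNCONSTRAINED = [
    [1.1, 0.1, 0.0],
    [0.0, 1.1, 0.1],
    [0.1, 0.0, 1.1],
]
```

Comparing `composite_gain(DOUBLY_STOCHASTIC, 30)` with `composite_gain(UNCONSTRAINED, 30)` shows the effect. The first stays at 1 because products of doubly stochastic matrices remain doubly stochastic. The second grows geometrically with depth, because each layer multiplies the row sum by 1.2.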

Reference

[1] Z. Xie et al., “mHC: Manifold-Constrained Hyper-Connections,” Jan. 05, 2026, arXiv:2512.24880. doi: 10.48550/arXiv.2512.24880.