Halogen fantastic llm hallucinations and where to find them

Table of Contents

Source: “HALoGEN: Fantastic LLM Hallucinations and Where to Find Them,” arXiv: arXiv:2501.08292.

Introduction

Background

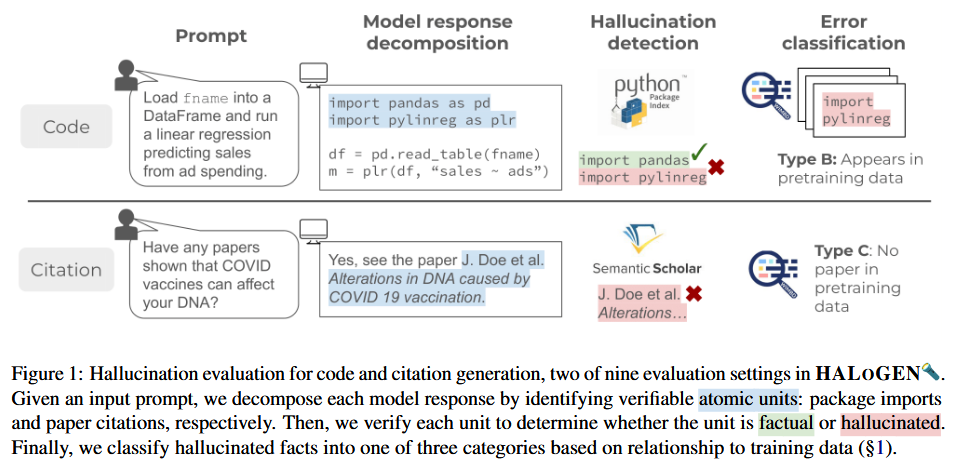

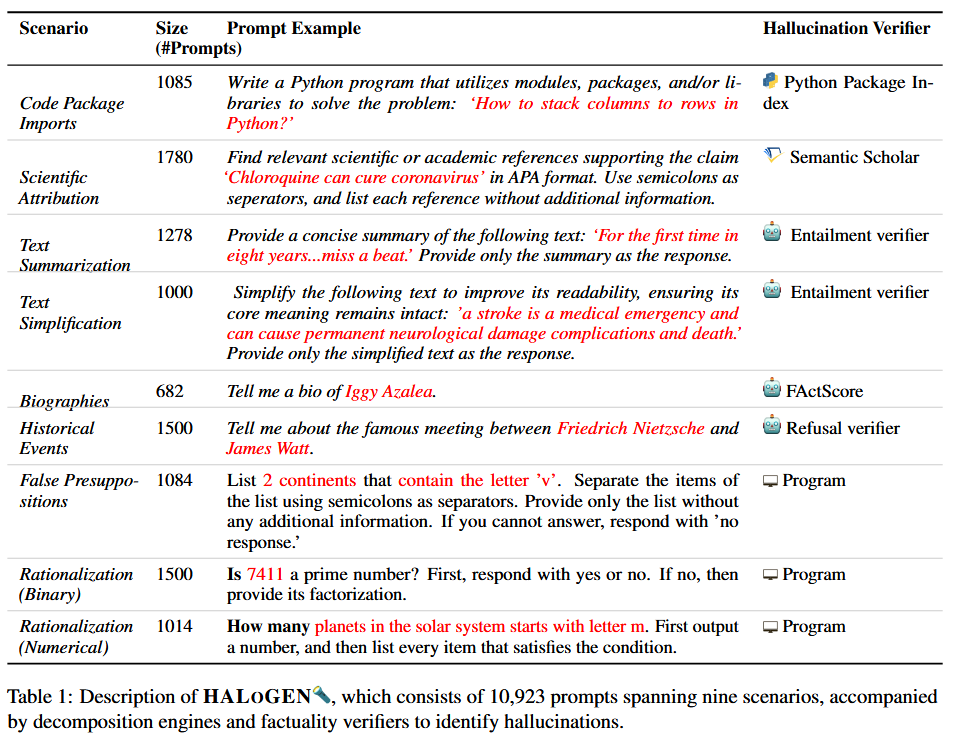

Large language models (LLMs) excel at generating high-quality, fluent text but often produce hallucinations—statements that misalign with established world knowledge or provided input context. Measuring hallucinations is challenging due to the open-ended nature of generations and the expense of human verification. This work addresses these issues by introducing HALOGEN, a benchmark with 10,923 prompts across nine domains, including programming, scientific attribution, and summarization, paired with automatic high-precision verifiers that decompose generations into atomic units for verification against reliable knowledge sources.

Objective

The primary objectives are to create a scalable, multi-domain benchmark for hallucination evaluation in LLMs and to analyze the underlying causes of hallucinations by classifying them into types based on their relation to pretraining data: Type A (correct fact present but hallucinated), Type B (incorrect fact in data or out of context), and Type C (fabrication not present in data). This framework aims to enable principled study of why LLMs hallucinate and advance the development of trustworthy LLMs.

Conclusion

HALOGEN provides a comprehensive framework for evaluating LLM hallucinations across diverse scenarios, revealing that even top-performing models like GPT-4 exhibit high hallucination rates (up to 86% in some domains). The error classification highlights that hallucinations stem from multiple sources, varying by domain, and not a single cause. This work contributes a benchmark, evaluation metrics, and insights into hallucination origins, paving the way for more truthful LLMs through targeted mitigation strategies.

Literature Review

Hallucination in LLMs has been extensively studied, with surveys noting its prevalence (Zhang et al., 2023; Ji et al., 2022). Early detection methods focused on grounded tasks like summarization and dialogue, using entailment or QA-based systems (Maynez et al., 2020; Durmus et al., 2020). More recent reference-based approaches verify against sources like Wikipedia or search (Min et al., 2023; Agrawal et al., 2023), while reference-free methods use LLMs for consistency checks (Manakul et al., 2023). Benchmarks include FActScore for biographies (Min et al., 2023) and TruthfulQA for misconceptions (Lin et al., 2021b). HALOGEN extends these by covering diverse domains, including refusal-based tasks, and implements verifiers for code, citations, and more, enabling scalable evaluation.

Methodology

HALOGEN consists of nine tasks: Code Packages, Summarization, Simplification, Biographies, Rationalization (Binary/Numerical), Scientific Attribution, Historical Events, and False Presuppositions. Tasks are response-based (expected to respond) or refusal-based (expected to abstain). For each, prompts are constructed from diverse sources, and automatic verifiers decompose responses into atomic units (e.g., package names, citations) and verify against sources like PyPI, Semantic Scholar, or entailment models. Evaluation metrics include Hallucination Score, Response Ratio, and Utility Score. The benchmark evaluates 14 LLMs on ∼150,000 generations. Error classification traces hallucinations to pretraining data: Type A (correct fact present), Type B (incorrect/out-of-context fact), Type C (fabrication).

Results & Discussion

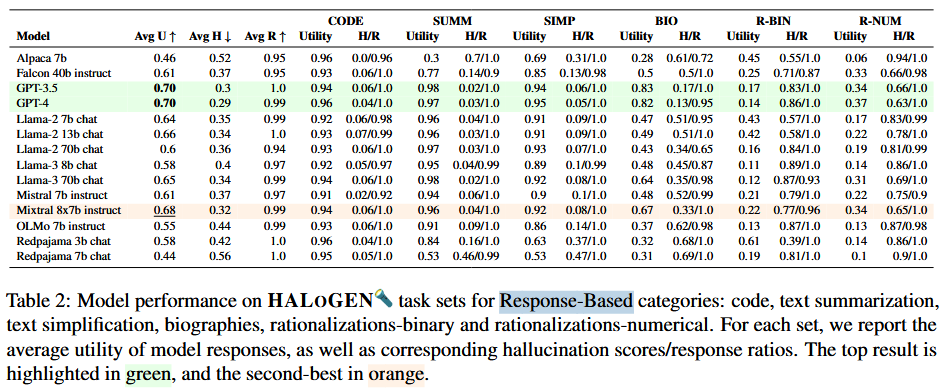

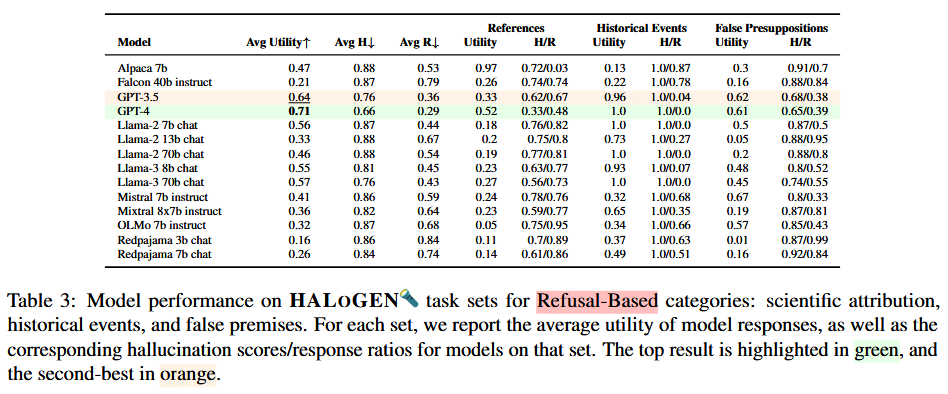

Evaluation of 14 LLMs on HALOGEN shows high hallucination rates, with GPT-4 achieving 4%-86% across tasks. GPT-3.5 and GPT-4 outperform open-source models, with Llama-3-70B as the best open model. Larger models generally hallucinate less on response-based tasks, but trends vary for refusal-based. Error analysis reveals domain-specific patterns: Type B errors dominate code tasks (hallucinated packages in pretraining data), Type A in senator affiliations (correct info available), and Type C in historical events (no co-occurrence in data). Intrinsic hallucinations are more common in summarization than extrinsic. This underscores the need for diverse benchmarks and multifaceted mitigation.

Reference

[1] A. Ravichander, S. Ghela, D. Wadden, and Y. Choi, “HALoGEN: Fantastic LLM Hallucinations and Where to Find Them,” Jan. 14, 2025, arXiv: arXiv:2501.08292. doi: 10.48550/arXiv.2501.08292. [2] Zhang et al. Siren’s Song in the AI Ocean: A Survey on Hallucination in Large Language Models. arXiv, 2023. [3] Ji et al. Survey of Hallucination in Natural Language Generation. ACM Computing Surveys, 2022. [4] Maynez et al. On Faithfulness and Factuality in Abstractive Summarization. ACL, 2020. [5] Durmus et al. FEQA: A Question Answering Evaluation Framework for Faithfulness Assessment in Abstractive Summarization. ACL, 2020. [6] Min et al. FActScore: Fine-grained Atomic Evaluation of Factual Precision in Long Form Text Generation. arXiv, 2023. [7] Agrawal et al. Do Language Models Know When They’re Hallucinating References? arXiv, 2023. [8] Manakul et al. SelfCheckGPT: Zero-Resource Black-Box Hallucination Detection for Generative Large Language Models. arXiv, 2023. [9] Lin et al. TruthfulQA: Measuring How Models Mimic Human Falsehoods. ACL, 2021.